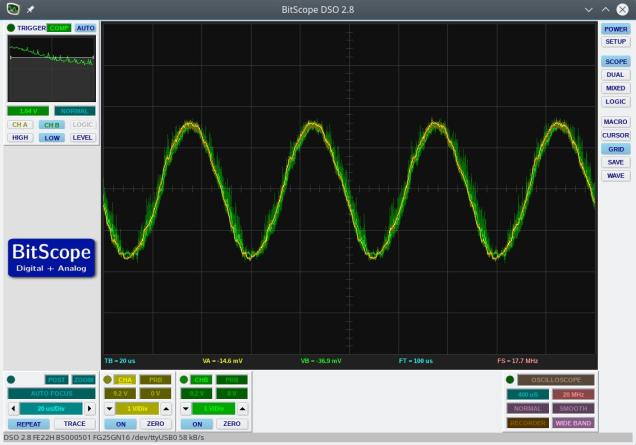

This example uses a 4th-order Butterworth low-pass filter that was designed in GNU Octave. The sampling rate was set to 200 kHz and the cut-off frequency to 20 kHz. The filter output at 20 kHz is shown below and, as expected, shows a gain of about 0.7 (approximately 1/√2, or -3 dB).

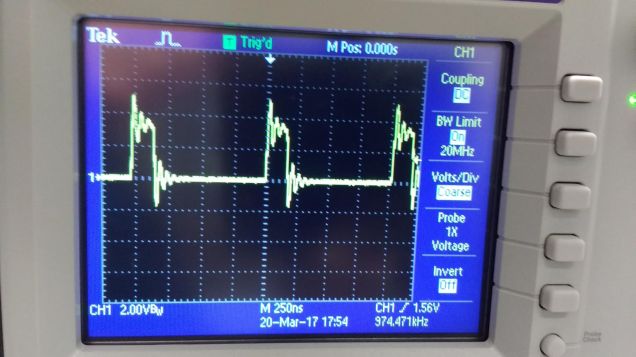

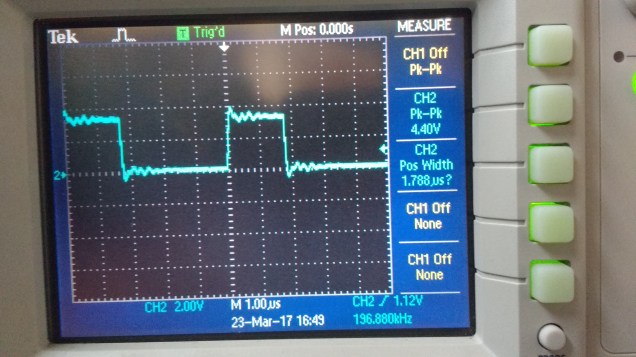
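The 0.7 figure is what the Butterworth magnitude response predicts at the cut-off frequency. As a quick check, with $n$ the filter order, $f_c$ the cut-off and $f_s$ the sampling rate (symbols introduced here for illustration), and assuming the digital design pre-warps the cut-off so that the analog prototype's gain at $f_c$ is preserved:

$$
|H(f)| = \frac{1}{\sqrt{1 + (f/f_c)^{2n}}}, \qquad |H(f_c)| = \frac{1}{\sqrt{2}} \approx 0.707 \quad (\approx -3.01\ \text{dB})
$$

$$
W_n = \frac{f_c}{f_s/2} = \frac{20\ \text{kHz}}{100\ \text{kHz}} = 0.2
$$

The gain at the cut-off does not depend on the order $n$. The order only sets how steeply the response falls away above $f_c$. $W_n$ is the cut-off normalized to the Nyquist frequency, which is the form filter design tools usually expect.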

Various attempts were made to optimize the performance of the filter. The execution time was measured by toggling an output pin immediately before and after the filter code. An oscilloscope trace of this output is below.

As can be seen, the execution time is 1.78 microseconds. This is pretty quick given that floating-point numbers are being used. My attempts to manually improve the performance made no significant difference compared to what the compiler’s optimizer could do. I also found that gcc’s -O2 optimization setting produced a faster filter than -O3. The filter shuffles data along the input and output delay lines on every sample. This may be considered less than optimal but, given that the order of the filter is low, switching to circular buffers (and managing the buffer state etc.) would probably make little difference.
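To make the delay-line shuffling concrete, here is a minimal sketch of a 4th-order direct-form IIR filter in C. It is not the code from the repository: the names `iir4_state`, `iir4_reset` and `iir4_step` are made up for illustration, and the coefficients are the values I would expect Octave's `butter(4, 0.2)` to return for this design, so regenerate them before relying on them.

```c
#define FILTER_ORDER 4
#define NUM_TAPS (FILTER_ORDER + 1)

/* Assumed coefficients for a 4th-order Butterworth low-pass with a
 * normalized cut-off of 0.2 (20 kHz cut-off, 100 kHz Nyquist).
 * Hypothetical values: check them against your own design output. */
static const float b_coef[NUM_TAPS] = {
    0.004824343f, 0.019297373f, 0.028946060f, 0.019297373f, 0.004824343f
};
static const float a_coef[NUM_TAPS] = {
    1.0f, -2.369513007f, 2.313988414f, -1.054665406f, 0.187379492f
};

typedef struct {
    float x[NUM_TAPS]; /* input delay line:  x[0] newest, x[4] oldest */
    float y[NUM_TAPS]; /* output delay line: y[0] newest, y[4] oldest */
} iir4_state;

/* Clear both delay lines so the filter starts from rest. */
void iir4_reset(iir4_state *s)
{
    for (int i = 0; i < NUM_TAPS; ++i) {
        s->x[i] = 0.0f;
        s->y[i] = 0.0f;
    }
}

/* Process one input sample and return one output sample. */
float iir4_step(iir4_state *s, float in)
{
    /* Shuffle: move every stored sample one slot towards the old end. */
    for (int i = FILTER_ORDER; i > 0; --i) {
        s->x[i] = s->x[i - 1];
        s->y[i] = s->y[i - 1];
    }
    s->x[0] = in;

    /* Difference equation:
     * y[n] = sum(b[i] * x[n-i], i = 0..4) - sum(a[i] * y[n-i], i = 1..4) */
    float acc = b_coef[0] * s->x[0];
    for (int i = 1; i < NUM_TAPS; ++i) {
        acc += b_coef[i] * s->x[i] - a_coef[i] * s->y[i];
    }
    s->y[0] = acc;
    return acc;
}
```

A caller would run `iir4_reset` once and then call `iir4_step` for each new sample, for example from a timer or ADC interrupt. With only four samples to move in each delay line, the shuffle is a handful of loads and stores that a compiler can typically unroll. A circular buffer would avoid the copies but adds index bookkeeping to every tap access.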

Code can be downloaded here on GitHub and should compile easily on Linux, Windows or Mac.